Measuring Understanding

Welcome to this year’s 2nd TRR Conference on “Measuring Understanding”.

Current XAI research centres on how understanding can be achieved. Topics include methods and tools for assessing and measuring understanding in the context of (a) dyadic everyday explanations, (b) the interpretability or explainability of AI systems, and (c) institutional environments. Specifically, the conference focuses on the methodological challenge of how to measure and operationalise understanding in diverse explanatory settings, including human-human and human-machine interaction, and on what these measurements imply for XAI. Researchers from across Europe are coming together to discuss recent challenges in measuring understanding.

Program

| Time | Monday, November 6 | Time | Tuesday, November 7 |
|---|---|---|---|
| 09:00 | RTG Conference (not public) | 09:00 | Keynote Talk by Niels Taatgen |
| 10:30 | Registration | 10:30 | Coffee Break |
| 11:00 | Conference Start and Kick-off | 11:00 | Understanding Workshop |
| 12:30 | Lunch Break | 12:30 | Lunch Break |
| 13:45 | Keynote Talk by Sylvaine Tuncer | 13:45 | Parallel Research Track 2 |
| 15:15 | Coffee Break | 15:15 | Coffee Break |
| 15:45 | Parallel Research Track 1 | 15:45 | Parallel Research Track 3 |
| 17:15 | Get-together with Snacks | 16:30 | Oral Session |
| 18:15 | Science Slam (seating starting at 18:00) | 17:15 | Understanding Wrap-up |

Research Tracks & Understanding Workshop

Parallel Research Track 1 - Monday

15:45 - 17:15

| CA and Ethnomethodology | Evaluating XAI via User Studies | AI for Education and Training |
|---|---|---|
| Room: L2.202 | Room: L2.201 | Room: L1.201 |
| Chair: | Chair: | Chair: |
| What 'Counts' as Explanation in Social Interaction Speaker: | Evaluating Concept- and Relation-based Explanations for Image Classification Speaker: | From Machine Learning to Machine Teaching: How Humans Can Learn from Explainable Artificial Intelligence Speaker: |
| Understanding Beyond Measurement Speaker: | Evaluating a Multi-Modal Design for an Informative Take-Over Request in a Drone-Controller Setting Speaker: | AR-mediated Explainability for Teaching and Cooperation Speaker: |
| Understanding Robots in Public: The Influence of Other Humans' Presence on Human-Robot Interaction Speaker: | Understanding Path Planning Explanations Speaker: | Collaboration with AI Technologies: AI-Developed Curricula in Language Education Speaker: |
| A, B, C, It's Easy as 1, 2, 3 - Inviting Linguistic Complexity to the Process of Operationalizing Understanding Speaker: | What's happening right now? Passenger Understanding of Highly Automated Shuttle's Minimal Risk Maneuvers by Internal Human-Machine Interfaces Speaker: | |

Understanding Workshop - Tuesday

11:00 - 12:30

Chair: Vivien Lohmer

Room: L2.202

Measuring the Progress of Understanding of the Explainee via Substantive Contributions in Explanatory Dialogues Speaker:

Approaches of Assessing Understanding Using Video-Recall Data Speaker:

Measuring Intra-Individual Differences in Signals of Understanding Speaker:

Parallel Research Track 2 - Tuesday

13:45 - 15:15

| Developing Instruments and Models for Measuring Understanding | Classification of Understanding and Human Oversight | Psychological and Cognitive Science View on XAI |
|---|---|---|
| Room: L2.202 | Room: L2.201 | Room: L1.201 |
| Chair: | Chair: | Chair: |
| Unraveling the Relationship Between Explanation as a Process and Understanding. Using the Block Model as Holistic Framework for Understanding Explainable AI Speaker: | Towards a BFO-based Ontology of Understanding Explanations Speaker: | Mental Model Disparity and its Effect on User Understanding and Satisfaction in XAI Speaker: |
| Conceptualization of Subjective Understanding towards Scale Development Speaker: | Beyond Understanding. Towards a Comprehensive Measure of Human Oversight in AI Speaker: | A Machine Learning Approach to the Prediction of Individual Differences in Psychological Reactivities Speaker: |
| A Communication Architecture for Measuring Understanding Speaker: | Understanding as an Interactive Precondition and a Problem: Securing of Understanding in Calls to the Ministry for State Security of the GDR Speaker: | Do Humans and CNN Better Understand the Visual Explanations Generated by other Humans or XAI Algorithms? Speaker: |
| Meta AI Literacy Scale: Development and Testing of an AI Literacy Questionnaire Based on Well-Founded Competency Models and Psychological Change and Meta-Competencies Speaker: | Unfooling SHAP and SAGE: Knockoff Imputation for Shapley Values Speaker: | Shedding Light: A Survey of Concept-Based Explainable AI Speaker: |

Parallel Research Track 3 - Tuesday

15:45 - 16:30

| Evaluation of Explanations | On the Interpretation of XAI Results |
|---|---|
| Room: L2.202 | Room: L2.201 |
| Chair: | Chair: |
| Modeling the Quality of Dialogical Explanations Speaker: | Pitfalls of Interpreting the Shapley Value in Explainable AI Speaker: |
| Compare-xAI: Toward Unifying Functional Testing Methods for Post-hoc XAI Algorithms into a Multi-dimensional Benchmark Speaker: | On the Confounding Roles of Explanation Faithfulness and Intuitivity in Measuring Understanding Speaker: |

Sylvaine Tuncer, Keynote Speaker

Keynote Title

Unpacking understanding in interaction: Video studies of technologies in use

Abstract

In this talk, I’ll show how qualitative video analysis can respond to some of the methodological challenges in studying ‘understanding’ and ‘explanation’ in interaction. I’ll briefly present the approach drawing on ethnomethodology and conversation analysis, which has been extensively applied to study human-machine interaction and contributed to the development of interdisciplinary fields such as HCI and CSCW.

Then, I will draw on past and current empirical studies undertaken with colleagues to discuss current topics and foundational concepts, and suggest ways to re-specify understanding and explanation as continuous, collaborative accomplishments. I will unpack how, for example, recipient design in the shaping of embodied action, through its publicly available features, sheds light on participants' understanding of each other's emerging conduct and interactional competencies. I will also discuss what different data collection methods allow, and conclude with remaining challenges and future directions in the light of current developments of technologies in specialised settings.

Niels Taatgen, Keynote Speaker

Keynote Title

Cognitive skills: the building blocks of human intelligence

Abstract

Humans have the amazing capacity to perform new tasks with little or no instruction. To explain this remarkable ability, I propose that people, when faced with a new task, compose the necessary knowledge for that task using cognitive skills as building blocks. In our cognitive modeling research, we have shown how a small set of skills can be instantiated into a variety of task models, and how these models explain phenomena such as the attentional blink and task-switching costs without having to rely on assumptions about limitations of the brain.

If cognitive skills are the building blocks of cognition, it is important to be able to identify them and to study how they are learned. To identify cognitive skills, we use a hybrid approach in which bottom-up machine learning methods exploit individual differences in student performance to construct a knowledge graph. Each node in this graph represents a combination of skills and a possible knowledge state of the student.

As a pilot, we constructed a knowledge graph for an arithmetic course in mid-level vocational education (MBO) in the Netherlands. The graph was based on a math entry test which, according to the publisher, addressed several specific topics, such as length measurements, weight, clock time, etc. However, when we constructed a knowledge graph from the data of 2480 students, we found that students do not differ in their mastery of those topics but rather in more general underlying skills, such as general arithmetic skills, reading skills, and multi-step reasoning.

In order to assess whether the analysis of learning materials can improve learning, I will report on a pilot study where we give advice to students on the basis of their knowledge state.

General questions go to conference@trr318.uni-paderborn.de, media enquiries to communication@trr318.uni-paderborn.de.

Access

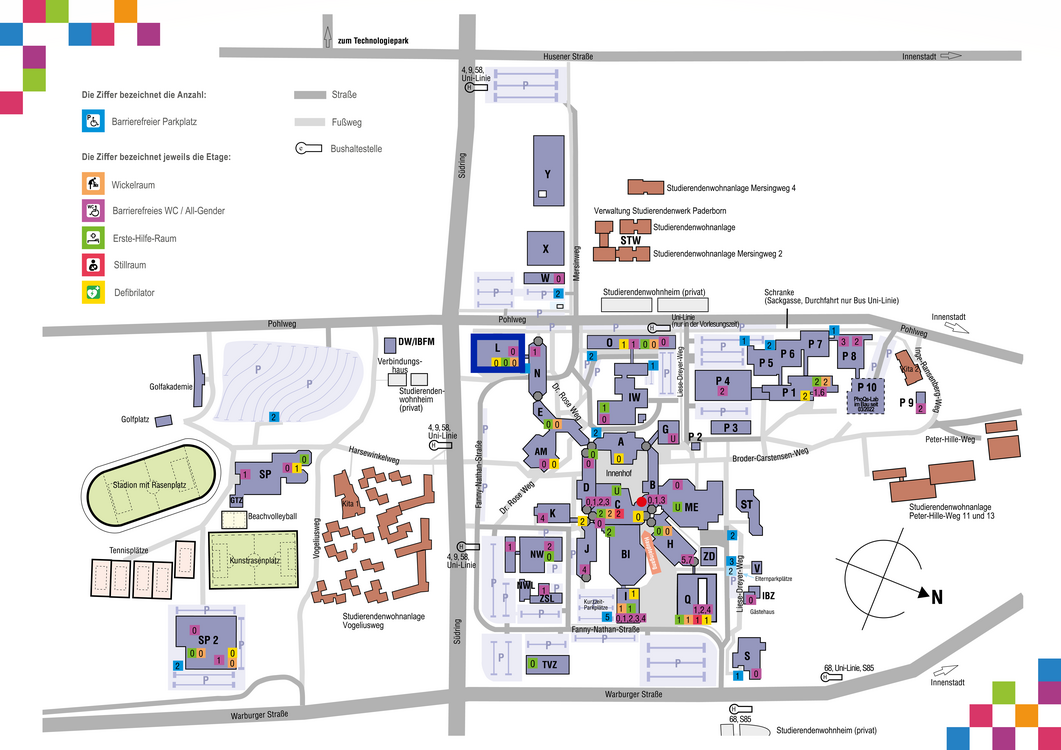

By public transport: Any of the following bus lines will take you from Paderborn main train station to the university campus: line "UNI"; line 4 direction "Dahl"; line 9 direction "Kaukenberg" to "Uni/Südring"; line 68 direction "Schöne Aussicht" to "Uni/Schöne Aussicht". Further information and bus schedules: www.padersprinter.de

By road: Paderborn is situated along the A33 motorway and can be reached via the A2 or A44 motorways. Take the exit "Paderborn Zentrum" and then follow the signs to "Universität" along the B64.

By air: Paderborn-Lippstadt Airport is located 20 km from Paderborn and can be reached by bus (lines 400 and S60, BBH). The closest international airports are Frankfurt, Düsseldorf, and Hanover. Further information and flight schedules: www.airport-pad.com

The conference takes place in the L building of Paderborn University, marked on the map above.
