Developing Explanations Together

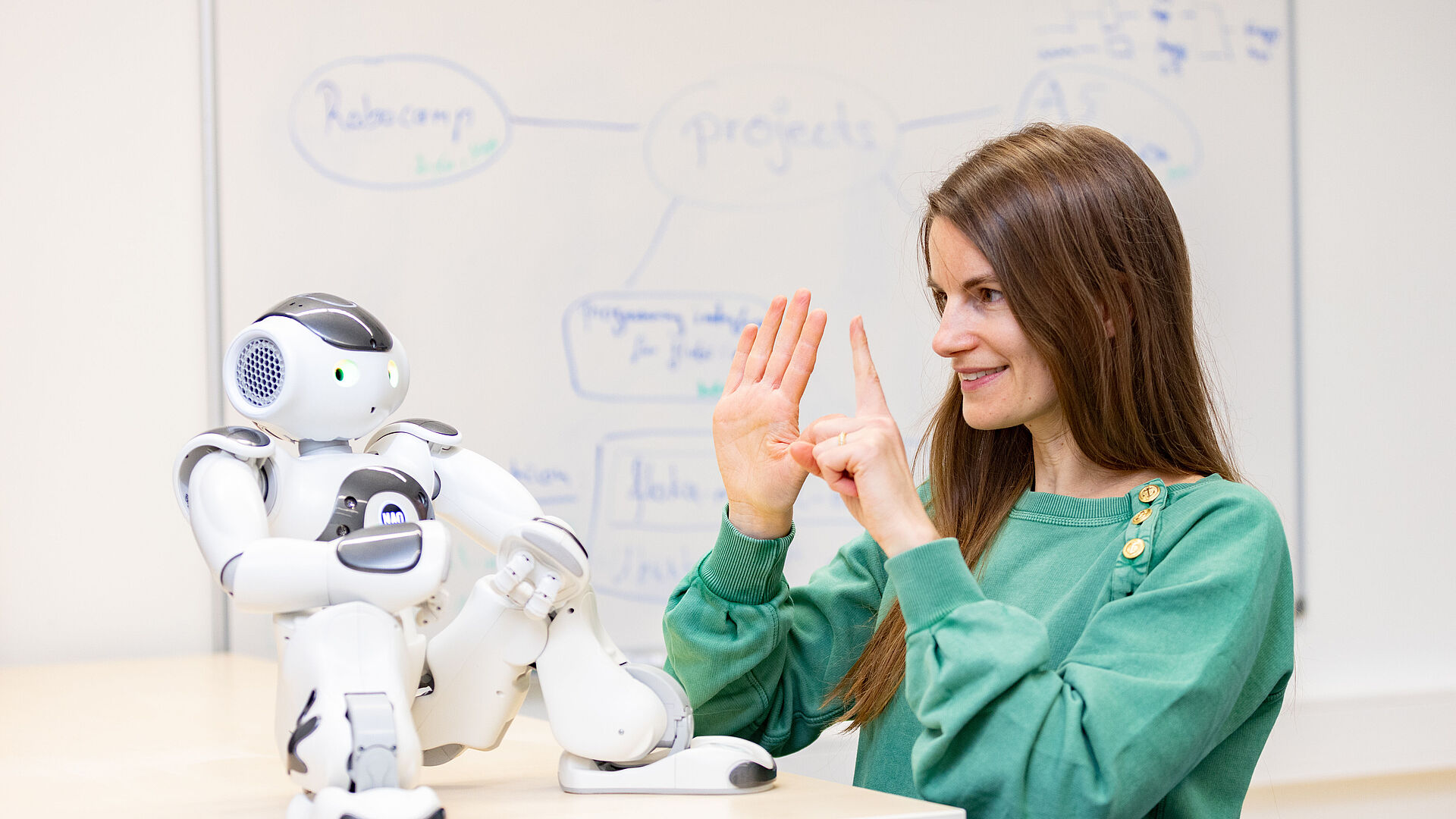

Algorithmic approaches such as machine learning are becoming increasingly complex, and with that complexity comes opacity, which makes it difficult to understand and accept the decisions proposed by artificial intelligence (AI). In the Collaborative Research Center/Transregio 318 “Constructing Explainability,” researchers are developing ways to actively involve users in the explanation process, thereby enabling co-constructive explanations. Since 2021, the interdisciplinary research team has been investigating the principles, mechanisms, and social practices of explanation and how these can be taken into account in the design of AI systems. The aim of the project is to give users relevant information in a form that lets them make informed decisions and participate actively and critically in a digital society.

Publications

All TRR 318 publications at a glance: specialist articles, conference papers, and conference abstracts.

Members

A total of 23 project leaders and about 40 research assistants from computer science, economics, linguistics, media science, philosophy, psychology, and sociology at Bielefeld University and Paderborn University investigate the co-construction of explanations.


Management Team

Spokesperson - Project Leader A01, A05, Z

Office: TP6.2.304

Phone: +49 5251 60-5717

E-mail: katharina.rohlfing@uni-paderborn.de


Deputy Spokesperson - Project Leader B01, C05

Phone: +49 521 10612249

E-mail: cimiano@techfak.uni-bielefeld.de

Member - Project Leader B06

Office: N2.304

Phone: +49 5251 60-2432

E-mail: suzana.alpsancar@uni-paderborn.de

E-mail: lehrealp@mail.uni-paderborn.de


Member - Project Leader A01, C05

Phone: +49 521 10612144

E-mail: skopp@techfak.uni-bielefeld.de

Member - Project Leader A03, C02

Office: Q3.301

Phone: +49 5251 60-2080

E-mail: kirsten.thommes@uni-paderborn.de


Member - Project Leader C04, C07

Phone: +49 511 76212377

E-mail: h.wachsmuth@ai.uni-hannover.de

Member - Project Leader A03, A05, WIKO

Phone: +49 521 10667885

E-mail: bwrede@techfak.uni-bielefeld.de

Member - Postdoctoral Researcher A01

Office: ZM2.B.02.26

E-mail: josephine.beryl.fisher@uni-paderborn.de


Manager - Employee Z - Research Management

Office: ZM2.B.02.01

Phone: +49 5251 60-4493

E-mail: ronja.hannebohm@uni-paderborn.de

E-mail: management@trr318.uni-paderborn.de

