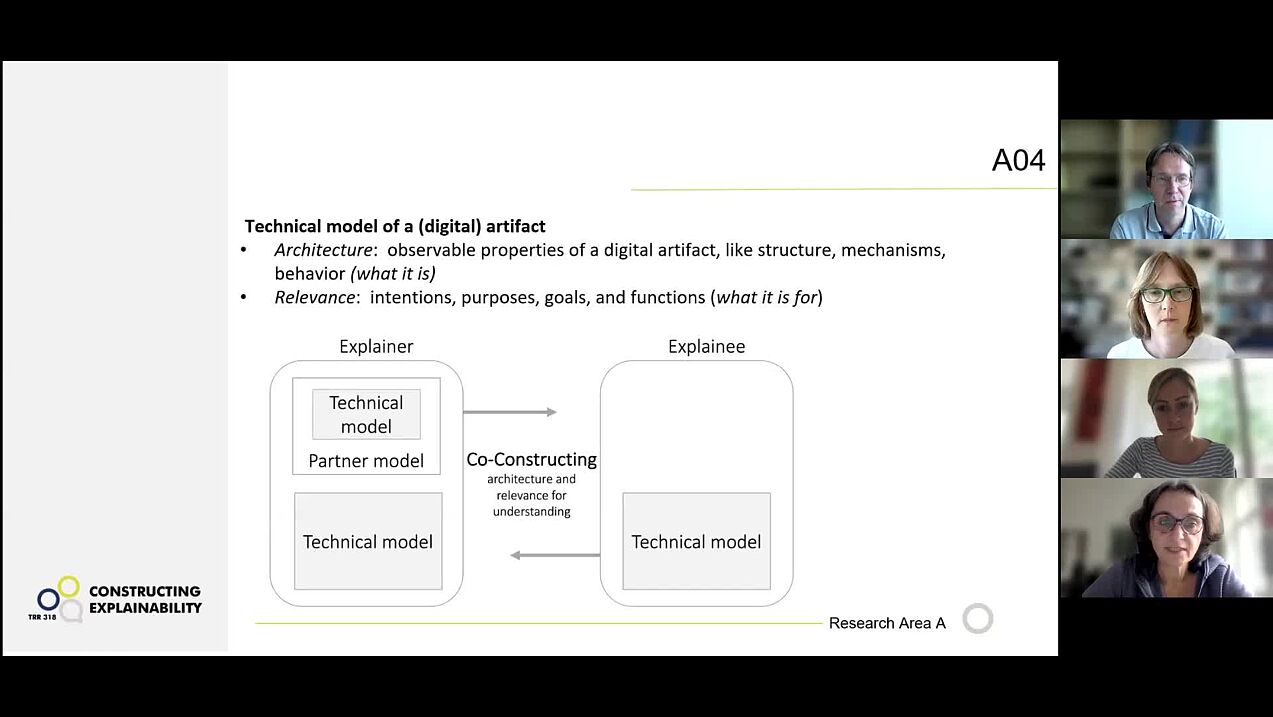

Project A04: Co-constructing duality-enhanced explanations

Project A04 investigates the different perspectives on the contents of explanations, i.e., what an explanation is about, and how these contents may change in the course of an interactive explanatory dialogue. An explanation of a technical artifact (which might be a hammer as well as a self-tracking device) can encompass two different perspectives. On the one hand, explainers can describe the (im)material properties of the artifact, i.e., its architecture. On the other hand, explainers may give reasons why something is the way it is and what goals can be pursued with it, thus describing its relevance.

After examining this duality in the first funding phase using the example of a board game, researchers from the fields of psychology, linguistics, and computer science education are now turning their attention to digital artifacts in the second funding phase. Their goal is to develop an educationally optimized, context-sensitive, and dynamic heuristic that takes both aspects, i.e., architecture and relevance, into account. This should enable explainees, the people receiving an explanation, to actively participate in shaping the explanations, in the long term also in interaction with explainable artificial intelligence (XAI).

Research areas: Psychology, Linguistics, Computer science education

Support Staff

Martina Drechsler, Paderborn University

Kenya Lorenz, Paderborn University

Former Members

Lutz Terfloth, Research associate

Posters

Conference poster presented at the 4th Summer School on Social Human-Robot Interaction with the title "How to End an Explanation" by Vivien Lohmer.

Conference poster presented at the International Symposium for Multimodal Communication 2024 with the title "The Role of Interactive Gestures in Explanatory Interactions" by Vivien Lohmer and Friederike Kern.

Conference poster presented at the International Symposium for Multimodal Communication 2023 with the title "Explaining the Technical Artifact Quarto! How Gestures are used in Everyday Explanations" by Vivien Lohmer, Lutz Terfloth and Friederike Kern.

Publications

Components of an explanation for co-constructive sXAI

A.-L. Vollmer, H.M. Buhl, R. Alami, K. Främling, A. Grimminger, M. Booshehri, A.-C. Ngonga Ngomo, in: K.J. Rohlfing, K. Främling, B. Lim, S. Alpsancar, K. Thommes (Eds.), Social Explainable AI, Springer, 2026, pp. 39–53.

Models of the situation, the explanandum, and the interaction partner

H.M. Buhl, A.-L. Vollmer, R. Alami, M. Booshehri, K. Främling, in: K.J. Rohlfing, K. Främling, B. Lim, S. Alpsancar, K. Thommes (Eds.), Social Explainable AI, Springer, 2026, pp. 269–295.

Adaptation

H.M. Buhl, B. Wrede, J.B. Fisher, M. Matarese, in: K.J. Rohlfing, K. Främling, B. Lim, S. Alpsancar, K. Thommes (Eds.), Social Explainable AI, Springer, 2026, pp. 247–267.

Bridging the Dual Nature: How Integrated Explanations Enhance Understanding of Technical Artifacts

L. Terfloth, H.M. Buhl, V. Lohmer, M. Schaffer, F. Kern, C. Schulte, Bridging the Dual Nature: How Integrated Explanations Enhance Understanding of Technical Artifacts, 2026.

Retrospective video recall for analyzing cognitive processes in naturalistic explanations

S.T. Lazarov, M. Schaffer, V. Gladow, H. Buschmeier, H.M. Buhl, A. Grimminger, (n.d.).

Navigating the dual nature: do explainers adapt to explainee interests when explaining technical artifacts

T. Lutz, H.M. Buhl, V. Lohmer, F. Kern, M.E. Schaffer, C. Schulte, International Journal of Technology and Design Education (2026).

Investigating Co-Constructive Behavior of Large Language Models in Explanation Dialogues

L. Fichtel, M. Spliethöver, E. Hüllermeier, P. Jimenez, N. Klowait, S. Kopp, A.-C. Ngonga Ngomo, A. Robrecht, I. Scharlau, L. Terfloth, A.-L. Vollmer, H. Wachsmuth, ArXiv:2504.18483 (2025).

Investigating Co-Constructive Behavior of Large Language Models in Explanation Dialogues

L. Fichtel, M. Spliethöver, E. Hüllermeier, P. Jimenez, N. Klowait, S. Kopp, A.-C. Ngonga Ngomo, A. Robrecht, I. Scharlau, L. Terfloth, A.-L. Vollmer, H. Wachsmuth, in: Proceedings of the 26th Annual Meeting of the Special Interest Group on Discourse and Dialogue, Association for Computational Linguistics, Avignon, France, n.d.

The Dual Nature as a Local Context to Explore Verbal Behaviour in Game Explanations

J.B. Fisher, L. Terfloth, in: Proceedings of the 29th Workshop on the Semantics and Pragmatics of Dialogue (SemDial 2025), 2025.

Explanation needs and ethical demands: unpacking the instrumental value of XAI

S. Alpsancar, H.M. Buhl, T. Matzner, I. Scharlau, AI and Ethics 5 (2025) 3015–3033.

Forms of Understanding for XAI-Explanations

H. Buschmeier, H.M. Buhl, F. Kern, A. Grimminger, H. Beierling, J.B. Fisher, A. Groß, I. Horwath, N. Klowait, S.T. Lazarov, M. Lenke, V. Lohmer, K. Rohlfing, I. Scharlau, A. Singh, L. Terfloth, A.-L. Vollmer, Y. Wang, A. Wilmes, B. Wrede, Cognitive Systems Research 94 (2025).

Perception and Consideration of the Explainees’ Needs for Satisfying Explanations

M.E. Schaffer, L. Terfloth, C. Schulte, H.M. Buhl, in: Paderborn University, Paderborn, Germany, 2024.

Explainers’ Mental Representations of Explainees’ Needs in Everyday Explanations

M.E. Schaffer, L. Terfloth, C. Schulte, H.M. Buhl, in: Joint Proceedings of the XAI-2024 Late-Breaking Work, Demos and Doctoral Consortium. 3793, Paderborn University, Paderborn, Germany, 2024.

The mental representation of the object of explanation in the process of co-constructive explanations

M. Schaffer, H.M. Buhl, in: U. Ansorge, B. Szaszkó, L. Werner (Eds.), 53rd DGPs Congress - Abstracts, 2024.

The role of interactive gestures in explanatory interactions

V. Lohmer, F. Kern, in: Second International Multimodal Communication Symposium (MMSYM) - Book of Abstract, 2024.

Approaches of Assessing Understanding Using Video-Recall Data

S.T. Lazarov, M. Schaffer, E.K. Ronoh, in: 2023.

Erklärungsverläufe und -inhalte aus Sicht Erklärender - eine qualitative Studie

M. Schaffer, H.M. Buhl, in: 2023.

Adding Why to What? Analyses of an Everyday Explanation

L. Terfloth, M. Schaffer, H.M. Buhl, C. Schulte, in: Springer, Cham, 2023.

Explaining the Technical Artifact Quarto!: How Gestures are used in Everyday Explanations

V. Lohmer, L. Terfloth, F. Kern, in: First International Multimodal Communication Symposium - Book of Abstract, 2023.

Die Anpassungen von Erklärungen an das Verständnis des Erklärgegenstandes der Gesprächspartner

M. Schaffer, B. Lea, C. Schulte, H.M. Buhl, in: C. Bermeitinger, W. Greve (Eds.), 52nd DGPs Congress - Abstracts, 2022.

Exploring monological and dialogical phases in naturally occurring explanations

J.B. Fisher, V. Lohmer, F. Kern, W. Barthlen, S. Gaus, K. Rohlfing, KI - Künstliche Intelligenz 36 (2022) 317–326.

Understanding and Explaining Digital Artefacts - the Role of a Duality (Accepted Paper - Digital Publication Follows)

L. Budde, C. Schulte, H.M. Buhl, A. Muehling, Seventh International Conference on Learning and Teaching in Computing and Engineering (2020).
