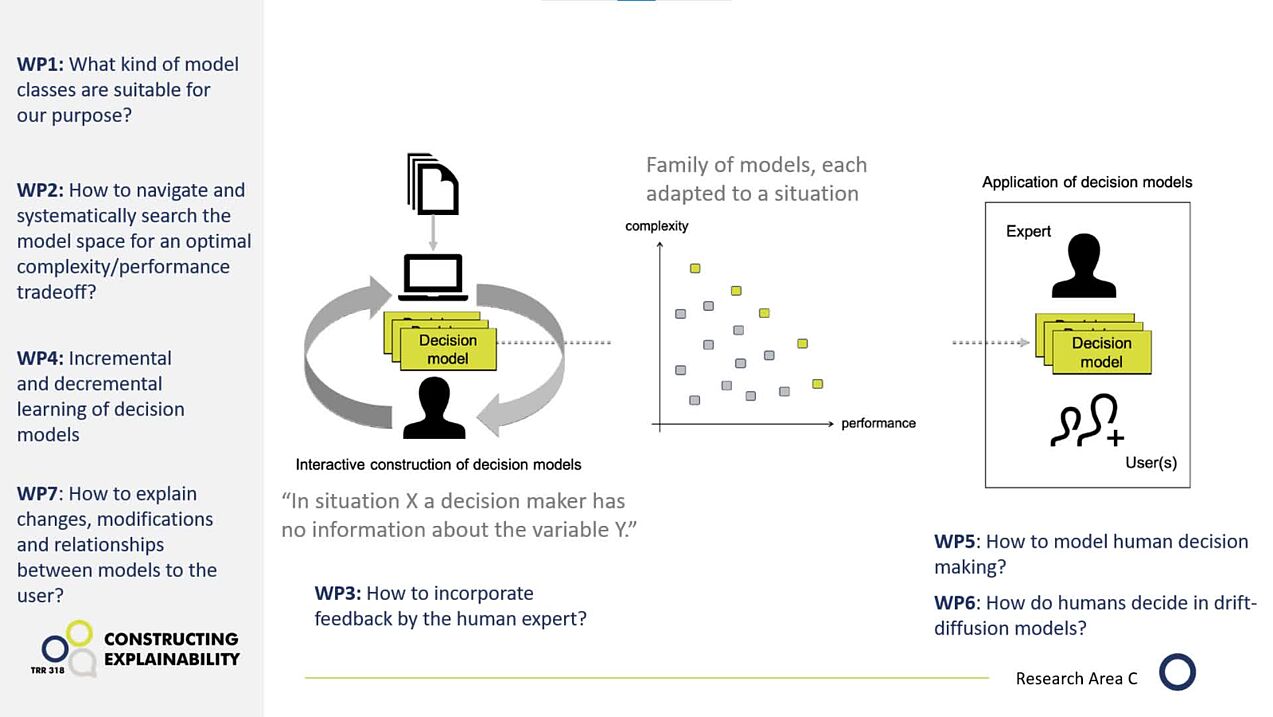

Project C02: Interactive learning of explainable, situation-adapted decision models

Different situations require different strategies for machine-based decision-making. In project C02, researchers from computer science and economics develop prescriptive models that provide concrete recommendations for achieving the best possible outcomes. They also examine when and by whom new test cases should be selected (by the AI, by humans, or collaboratively) to strategically expand the knowledge base and improve learning. This strategic selection of test cases is referred to as exploration and aims to achieve better results in the long term.

The goal is to empower decision-makers to better integrate the AI's recommendations with their own knowledge, enabling them to make informed and effective decisions.

Research areas: Computer science, Economics

Associate Member

Jonas Hanselle, Paderborn University

Support Staff

Nils Bojack, Paderborn University

Julia Rustemeier, Paderborn University

Luca Manuel Siekermann, Paderborn University

Former Members

Dr. Michael Rapp, Research associate

Stefan Heid, Research associate

Publications

Generation of Explanatory Content and Requirements for Social XAI

K. Främling, K. Thommes, B. Wrede, in: Social Explainable AI, Springer Nature Singapore, Singapore, 2026.

Measuring the Outcome of sXAI

K. Thommes, in: Social Explainable AI, Springer Nature Singapore, Singapore, 2026.

Operationalizing Social Interaction

H. Wachsmuth, K. Thommes, M. Alshomary, in: Social Explainable AI, Springer Nature Singapore, Singapore, 2026.

Exploration of Explaining Content

K. Främling, B. Wrede, K. Thommes, in: Social Explainable AI, Springer Nature Singapore, Singapore, 2026.

Interaction History in Social XAI

K. Thommes, K. Främling, B. Wrede, S. Kubler, in: Social Explainable AI, Springer Nature Singapore, Singapore, 2026.

Evaluation Principles

K. Thommes, in: Social Explainable AI, Springer Nature Singapore, Singapore, 2026.

Algorithm, expert, or both? Evaluating the role of feature selection methods on user preferences and reliance

J. Kornowicz, K. Thommes, PLOS ONE (2025).

Would I regret being different? The influence of social norms on attitudes toward AI usage

J. Kornowicz, M. Pape, K. Thommes, arXiv (2025).

MSL: Multi-class Scoring Lists for Interpretable Incremental Decision-Making

S. Heid, J. Kornowicz, J. Hanselle, K. Thommes, E. Hüllermeier, in: Communications in Computer and Information Science, Springer Nature Switzerland, Cham, 2025.

An Empirical Examination of the Evaluative AI Framework

J. Kornowicz, International Journal of Human–Computer Interaction (2025) 1–19.

Learning decision catalogues for situated decision making: The case of scoring systems

S. Heid, J.M. Hanselle, J. Fürnkranz, E. Hüllermeier, International Journal of Approximate Reasoning 171 (2024).

Human-AI Co-Construction of Interpretable Predictive Models: The Case of Scoring Systems

S. Heid, J. Kornowicz, J.M. Hanselle, E. Hüllermeier, K. Thommes, in: Proceedings 34. Workshop Computational Intelligence, 2024, p. 233.

Towards a Computational Architecture for Co-Constructive Explainable Systems

H. Buschmeier, P. Cimiano, S. Kopp, J. Kornowicz, O. Lammert, M. Matarese, D. Mindlin, A.S. Robrecht, A.-L. Vollmer, P. Wagner, B. Wrede, M. Booshehri, in: Proceedings of the 2024 Workshop on Explainability Engineering, ACM, 2024, pp. 20–25.

The Role of Response Time for Algorithm Aversion in Fast and Slow Thinking Tasks

A. Lebedeva, J. Kornowicz, O. Lammert, J. Papenkordt, in: Artificial Intelligence in HCI, 2023.

Aggregating Human Domain Knowledge for Feature Ranking

J. Kornowicz, K. Thommes, Artificial Intelligence in HCI (2023).

Probabilistic Scoring Lists for Interpretable Machine Learning

J.M. Hanselle, J. Fürnkranz, E. Hüllermeier, in: Discovery Science, Springer Nature Switzerland, Cham, 2023.

Comparing Humans and Algorithms in Feature Ranking: A Case-Study in the Medical Domain

J.M. Hanselle, J. Kornowicz, S. Heid, K. Thommes, E. Hüllermeier, in: M. Leyer, J. Wichmann (Eds.), LWDA’23: Learning, Knowledge, Data, Analysis, 2023.
